It started with an unmistakable screeching sound. The lab technicians were puzzled and alarmed. Their highly sensitive centrifuges were spinning out of control and destroying themselves. What on earth was going on?

Spinning Around

At Natanz, Iran’s nuclear scientists worked in total secrecy, racing against the clock to produce enough enriched uranium for a warhead, before their enemies in Tel Aviv could foil their ambitions. But unbeknownst to them, they’d already lost the race. Thousands of miles away, another group of scientists had been working on their own top secret weapons project: a computer virus that would come to be known as “Stuxnet” (Zetter, 2014). It was already inside Natanz, quietly wreaking havoc on Iran’s nuclear centrifuges.

The Weakest Link

The creators of Stuxnet were cunning and technologically savvy, but they faced a seemingly insurmountable obstacle: How could they get their deadly payload inside an Iranian nuclear weapons plant? Unless that problem could be solved, Stuxnet was useless. So how did they do it?

The answer illustrates a profound and often-overlooked truth about cybersecurity: Humans are almost always the weakest link. The world’s most sophisticated malware, a marvel of technological ingenuity, entered Natanz on a simple USB drive, probably plugged in by an unwitting employee.

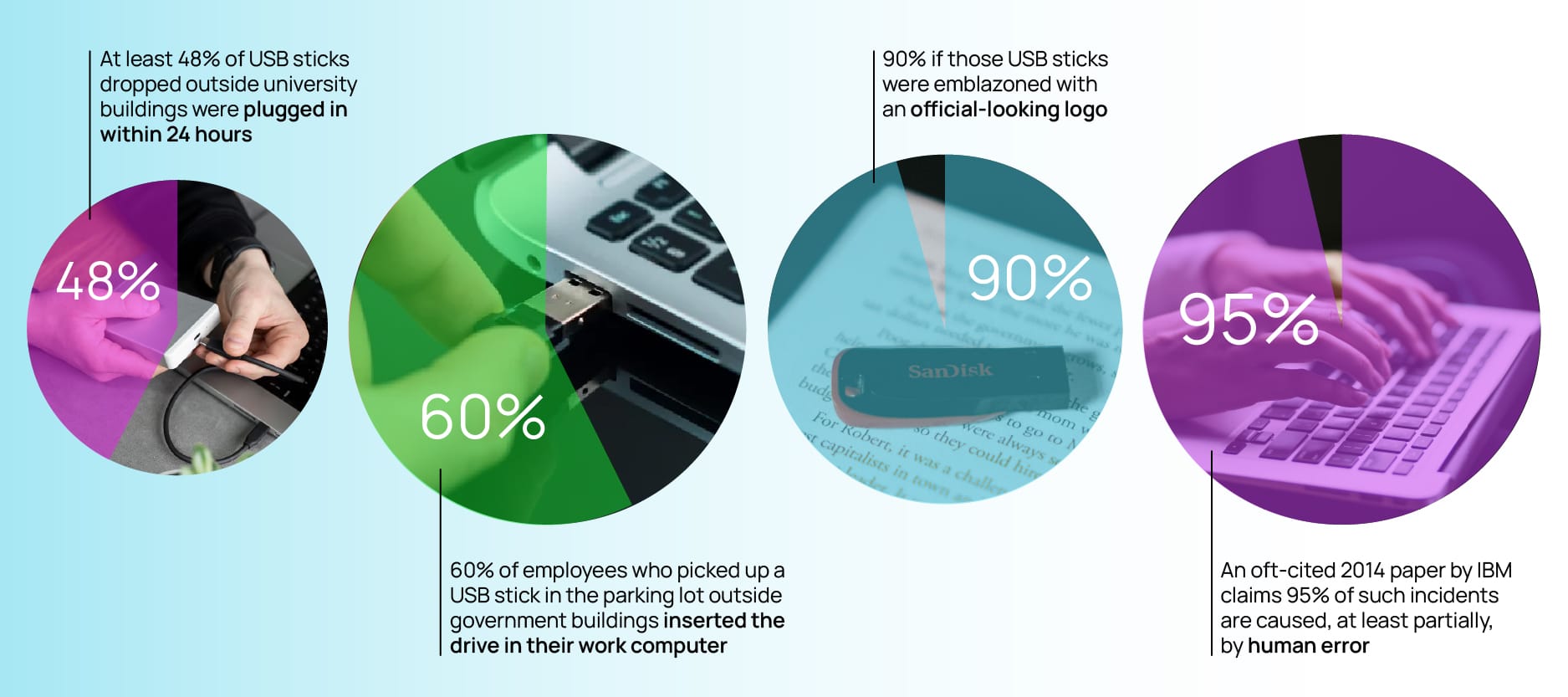

But before you mock the hapless Iranian contractor who inadvertently sabotaged his or her country’s nuclear program, consider a study at the University of Illinois Urbana-Champaign, which found that at least 48% of USB sticks dropped outside university buildings were plugged in within 24 hours (Tischer et al., 2016). A similar study conducted by the US Department of Homeland Security found that 60% of employees who picked up a USB stick in the parking lot outside government buildings inserted the drive into their work computer (Edwards et al., 2011). That figure rose to an astonishing 90% if the USB sticks were emblazoned with an official-looking logo.

It’s not just USBs. Numerous studies illustrate that human error is a major contributory factor in data breaches and other cyber incidents. An oft-cited 2014 paper by IBM claims 95% of such incidents are caused, at least partially, by human error.

While cybersecurity is often thought of as a technological arms race between competing programmers, it’s at least as much a human problem. Successful scammers–whether working for criminal gangs or national intelligence agencies–have a good understanding of human nature and the frailties of human perception and decision-making.

Under Pressure

Psychologists, behavioral scientists, and neuroscientists have long known that vast swathes of human thinking occur quickly and automatically. Nobel Laureate Daniel Kahneman and others use the metaphor of System 1 and System 2 to illustrate the difference between thinking fast (i.e., automatically) and thinking slow (i.e., deliberately) (Kahneman, 2011).

While fast, automatic thinking is effective much of the time and has certainly proved evolutionarily adaptive, it can make us vulnerable and prone to biases too. Such automatic thinking often relies on mental heuristics and simple rules of thumb. Rather than solving the difficult problem of what to do in a given situation, we often solve an easier one. What do I normally do? What’s the easiest thing to do? What’s everyone else doing? What feels good? Or, as in the case of a USB drive, what will satisfy my curiosity?

Salespeople have long been aware that quick decisions aren’t always the best decisions; limited time offers are one means of ramping up time pressure, but there are many more nefarious versions of the same technique. Crucially, scammers often combine time pressure with additional stressors: fake warnings of illicit account activity, missing parcels, endangered loved ones, or impending investigations by law enforcement.

In the cold light of day, when one is thinking calmly and logically, these warnings are often patently absurd. Yet in the moment, seemingly racing against the clock–with fear and anxiety heightened by apparently threatening circumstances–decisions to click on links, input bank details, or provide personal data seem all too compelling.

A Very Human Problem

Perceptual flaws, automatic decision-making, and the influence of stress hormones on judgement are all intrinsically human foibles. For cybersecurity professionals tasked with protecting individuals and organizations, this poses a conundrum: How do you fight against human nature? Traditionally, cybersecurity efforts have offered two answers to that question.

The first acknowledges the difficulty of fighting our biology–pessimistically, but accurately, assuming that, well, humans will be humans (and make mistakes). This line of thinking leads to hard, technological controls: regularly changing and ever-more-complex passwords, stricter access authorization, disabling USB ports, firewalls, virus scanning, and the like.

The second tends to ignore the reality of human nature altogether, relying instead on some pervasive (but inaccurate) assumptions about human behavior. The resulting solutions are common to organizational life: raising awareness through communications and online training. The underlying assumption seems to be that if you inform people and get them to “care enough,” the desired actions will follow. However, a mountain of evidence tells us that logic, reason, and abstract learning are unlikely to provide much defense when people face time pressure and ingrained habits kick in.

So what can leaders do to reduce the likelihood of cybersecurity disasters, like the one that hit the Iranian nuclear facility at Natanz?

5 Foundational Steps

To address this question, we recommend turning to behavioral science–a field that helps us better understand and influence human decision-making–and exploring five paths that have consistently proven successful in mitigating human risk:

- Define a specific set of desired behaviors

In many organizations, the conversation remains at the broad level of “cybersecurity.” And of course, nearly everyone nods their head and agrees that cybersecurity is important. However, for these positive intentions to consistently translate into actions, we need to move from generalities to a set of specific, clearly-defined security behaviors (not clicking on unknown links, not sharing passwords, not reusing old templates, etc.). It is only at this level that we can begin to develop interventions to facilitate and reinforce safe habits.

- Remind people at the right time

Too often, cybersecurity training takes the form of extended in-person sessions and/or an endless parade of online modules. While these approaches may “check the box” (in terms of conveying expectations, policies and procedures), they are largely ineffective in driving action, due to human limitations in absorbing and retaining new information. In fact, the sobering reality of the “forgetting curve” (Ebbinghaus, 1885) is that over 90% of what we learn (in classrooms or online) is forgotten within a week.

To combat this, leaders need to think of cybersecurity training as a starting point–rather than an end in itself–and place an equal emphasis on developing reminder systems to reinforce key behaviors. These reminders can take a multitude of forms, from physical stickers and “cheat sheets” to timely pop-ups. Ideally, they will be well-integrated into the work processes and/or environment of an organization, so that they become automatic, rather than “something extra” for people to remember and act upon.

- Make it social (team-oriented)

Many organizations make the mistake of targeting cybersecurity communications, training, and support solely at the individual level. This approach misses an enormous opportunity to leverage the power of social forces to promote positive, safe behaviors. In fact, integrating some component of team-based learning–possibly through periodic moderated discussions of cybersecurity lessons, issues, and real-world challenges–can provide many benefits:

- Team-based learning can make training more relevant & memorable (via group discussion & linkage to real-world issues)

- Team-based learning can promote adherence to procedures (via social norms, accountability and/or healthy competition among teams)

- Team-based learning can foster psychological safety (via open discussion of challenges and potential solutions)

Most importantly, team-based activities can foster a shared commitment. So while individual humans are the weakest link in cybersecurity, effective teams can be a powerful antidote.

- Provide real-time support

While many cybersecurity breaches are careless and inadvertent, others are deliberate decisions (to bypass protocols) brought on by time-pressure and deadlines. For example, on a recent project, we found that skilled, knowledgeable programmers were occasionally resorting to unsafe work-arounds to “get things done,” when the company’s approved systems and processes were inaccessible or perceived to be too slow and cumbersome. At these “moments of frustration” (and enterprise risk), employees need immediate guidance, rather than extended multi-step tech support processes.

Fortunately, a wide variety of solutions now exist, from AI-enabled chatbots to 24/7 helplines. But whether the support is human or technical, a critical challenge is to drive usage through timely reminders, easy access, and positive reinforcement. Unfortunately, even small “frictions” (minor inconveniences or disappointments) can deter people from taking the uncomfortable step of asking for help.

- Emphasize positive reinforcement

Many cybersecurity teams are so focused on policing the minority of bad actors in their organizations that they neglect the vast majority with positive intent. These people want to “do the right thing,” but many of them need some help to make that happen. Behavioral science teaches us that one of our most powerful tools is positive reinforcement. Interestingly, this doesn’t have to involve bonuses or tangible rewards, although they can be effective.

Instead, it’s a matter of making people feel good when they follow the rules, perhaps with a simple acknowledgement (e.g., “Thanks for protecting our data”). When we do this consistently and immediately–and combine our efforts with visible cues or reminders to trigger the action–we can create positive “habit loops” to reinforce new behaviors and instill safe habits, which is ultimately the key to reducing human risk.

Designing for Humans

Unfortunately, there’s no “silver bullet” or single clever solution to eliminate human risk; even extremely smart, dedicated, informed people–like the Iranian nuclear scientists working at Natanz–are occasionally susceptible to moments of inattention (and/or to falling victim to their own curiosity).

Acknowledging this reality–while recognizing that the overwhelming majority of employees have positive intentions–is the most important starting point for leaders. From here, we can develop policies, procedures, and tools with human nature (and limitations) in mind.

- Rather than overwhelming people with information and complexity, we can focus on making it easier for them to “do the right thing.”

- Rather than relying solely on surveillance and policing, we can emphasize positive feedback and habit formation.

- Rather than depending only on technological solutions, we can help create a culture of compliance in which social norms and shared commitment serve to reinforce and reward safety and security.

Organizations that adopt this approach–and invest in building behaviorally-informed processes, reminders and reinforcements–will be rewarded with fewer human-risk crises like the one that doomed the centrifuges at Natanz.

References

Ebbinghaus, H. (1885). Memory: A Contribution to Experimental Psychology (H. A. Ruger & C. E. Bussenius, Trans.). Teachers College.

Edwards, C., Kharif, O., & Riley, M. (2011). Human Errors Fuel Hacking as Test Shows Nothing Stops Idiocy. Bloomberg.com. https://www.bloomberg.com/news/articles/2011-06-27/human-errors-fuel-hacking-as-test-shows-nothing-prevents-idiocy?embedded-checkout=true

IBM Security Services 2014 Cyber Security Intelligence Index. (2014). IBM Global Technology Services, 1–12. https://i.crn.com/sites/default/files/ckfinderimages/userfiles/images/crn/custom/IBMSecurityServices2014.PDF

Kahneman, D. (2011). Thinking, Fast and Slow. Farrar, Straus and Giroux.

Tischer, M., Durumeric, Z., Foster, S., Duan, S., Mori, A., Bursztein, E., & Bailey, M. (2016). Users Really Do Plug in USB Drives They Find. IEEE Symposium on Security and Privacy (SP), 306–319. https://doi.org/10.1109/sp.2016.26

Zetter, K. (2014, November 3). An Unprecedented Look at Stuxnet, the World’s First Digital Weapon. Wired. https://www.wired.com/2014/11/countdown-to-zero-day-stuxnet/